About six months ago, Chinese conglomerate Alibaba released technology that allows customers to pay for goods via facial recognition. The tech giant, now worth more than $500 billion, chose KFC as the testing ground for its new payment method. That was a logical move, considering that Alibaba is invested in Yum! China, the company responsible for every KFC, Taco Bell, and Pizza Hut operating within the country.

This “Smile to Pay” method is made possible by Face++, a company that specializes in facial and body recognition technology. And the commercial sector isn’t the only area investing heavily in facial recognition in China. Some train stations in Beijing use facial recognition (matched against government IDs) to print tickets, and many office buildings (including Alibaba’s headquarters) are phasing out key cards in favor of this newer security measure. Still, the most common use of facial recognition in China is in policing.

As early as last August, police in Hangzhou, a city with a population comparable to New York City’s, began using surveillance cameras fitted with this technology to identify suspects. More recently, Chinese police officers have started wearing sunglasses equipped with facial recognition software. These glasses let officers query a database and pull up information on anyone who comes into their line of sight. While this technology seems like it belongs in a Ridley Scott movie, it’s here now, and it’s important for us to recognize its political and social implications.

You don’t have to be a Luddite to spot the dangerous precedent set by this new technology. When police officers can access your personal data on the fly, it’s reasonable to worry about your civil rights. Still, this technology doesn’t seem to be the privacy-erasing apocalypse that haunts the dreams of libertarians everywhere. It has helped police in China identify people involved in kidnappings and hit-and-runs, as well as scammers using fake IDs. On the question of privacy, the benefits of this technology seem to outweigh the costs. Where it becomes a problem is in its inability to deal with nuance. For example, authorities in Shenzhen City are using facial recognition to automatically issue fines to jaywalkers by text message. The system also keeps records of repeat offenders and could affect their credit scores.

Officers in China review footage using facial recognition software

The issue this technology presents is similar to that of traffic cameras. Before New Jersey banned them, these cameras issued tickets for running red lights and making illegal turns. The problem was that they were programmed to operate within the strictest possible parameters: they followed the law to the letter. Because the program was completely automated, there was no way to review each case individually. Tickets went out at a rapid clip, arriving by mail for anyone who so much as made a right turn a second after the light turned red. From a government funding standpoint, it was a slam dunk; the towns that put up these cameras made a ton of money from the tickets. But the public outcry against the cameras was huge. While China has a much more authoritarian social structure than we do in the States, it’s doubtful that the people of Shenzhen City will embrace this new way of doling out fines.

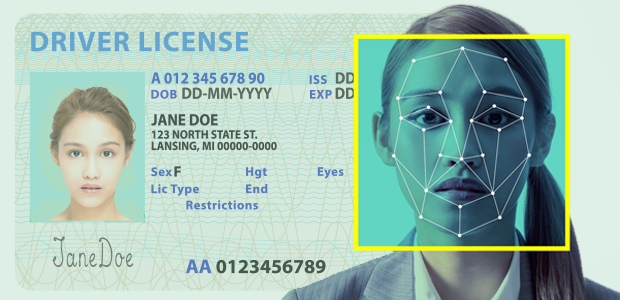

A facial recognition program scans the face of a passerby

As usual, the fundamental issue with this new tech isn’t something deliberately insidious in its design, nor is it the way law enforcement uses it. The real problem, as with all automated technologies, is its inability to replicate human decision making. No amount of programming will allow this technology to distinguish the subtle differences between offenders. There’s a reason we shouldn’t let algorithms run our police departments: it’s impossible to account for the nearly infinite number of variables that go into human behavior. While there are certainly patterns, if we rely too heavily on these machines, we set the precedent that their programming is superior to our officers’ powers of deduction. Machines are fundamentally tools that help us do our jobs; they can’t do those jobs for us.

Still, this new facial recognition software is too useful, and frankly too cool, to be left in the box. Companies and law enforcement agencies alike are going to use this technology one way or another. There’s already a burger chain in the US using the “Smile to Pay” method, and it’s only a matter of time before our law enforcement agencies catch on, if they haven’t already. In implementing this new technology, it’s important that our police don’t mistake the tool for the worker.